In my last post, I explained my efforts since September 2020 to get key documents related to the Literacy and Numeracy Test for Initial Teacher Education students (LANTITE) released publicly. That post focuses on the ongoing review by the Australian Information Commissioner of the Department of Education’s decision to refuse access to LANTITE technical and administration reports.

On November 29, 2024, I received some further correspondence from an Assistant Review Adviser in the Office of the Australian Information Commissioner (OAIC). They informed me that the OAIC “are actively working to progress” the review to the “significant decisions team.” That sounds like they may be getting quite close to making a final decision on whether the LANTITE reports should be released. Hurray!

The Assistant Review Adviser also sent me further submissions from the Department of Education and the Australian Council for Educational Research (ACER). I will discuss their submissions in this post and how I responded.

Reliability and validity

The OAIC asked the Department of Education to respond to my argument that publication of technical reports “‘is seen within the educational measurement profession as meeting an ethical obligation to provide evidence that the technical quality, including reliability and validity, of the test meets its intended purpose’ (Cizek and Rosenberg, 2011, p. 236, quoting the Code of Fair Testing Practices in Education, JCTP, 1998).”

In response, the department listed the following documents as satisfying “any ‘ethical obligation’ to provide evidence of the technical quality, reliability and validity of the LANTITE”:

- The LANTITE Assessment Framework 2024

- The Described Proficiency Scale for LANTITE

- ‘Practice material and test taking strategies available on the ACER website’

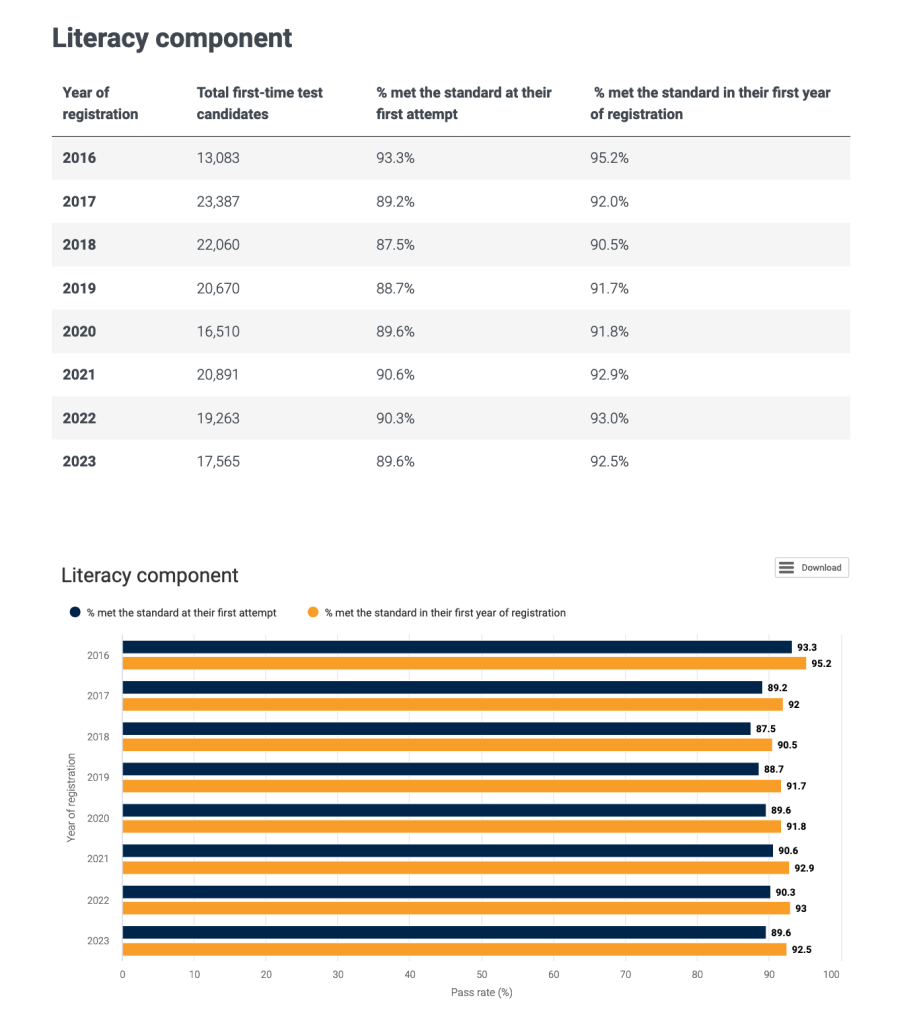

- “Extensive data regarding the number of test candidates who have attempted the LANTITE and the percentage who have met the required standard, broken down by literacy and numeracy components, year and whether candidates met the required standard on their first attempt or in their first year of registration,” available on the department’s website.

In my own response to the department’s claims, I first provided some additional background information on standard educational measurement practices relating to the validity and reliability of credentialing tests. Credentialing tests “are intended to provide the public, including employers and government agencies, with a dependable mechanism for identifying practitioners who have met particular standards” (American Educational Research Association, American Psychological Association, & National Council on Measurement in Education [AERA, APA, & NCME], 2014, p. 175). To help the OAIC understand standard educational measurement practices, I referred to the Standards for Educational and Psychological Testing, a document “widely considered to be the authoritative source for best testing practices in education and psychology” (Cizek & Rosenberg, 2011, p. 212). In a section devoted to tests for credentialing purposes, the Standards state that

“defining the minimum level of knowledge and skill required for licensure or certification is one of the most important and difficult tasks facing those responsible for credentialing. The validity of the interpretation of the test scores depends on whether the standard for passing makes an appropriate distinction between adequate and inadequate performance. … Verifying the appropriateness of the cut score or scores on a test used for licensure or certification is a critical element of the validation process” (AERA, APA, & NCME, 2014, p. 176).

In this passage, the Standards make clear that analysis of test scores is critically important when it comes to ensuring that a test such as LANTITE is valid for credentialing purposes. Evidence that LANTITE cut scores (i.e., the minimum score to be achieved by a test-taker to be deemed adequately literate or numerate) are appropriate should be shared with the public to ensure the “transparency that measurement professionals, educators, and the general public expect” (NAPLAN 2013 Technical Report, p. 1) and to build public confidence that LANTITE results are reliable, valid, and fair.

None of the documents listed by the department discusses the reliability of LANTITE results. The Assessment Framework does discuss content validity (i.e., whether the individual test items relate to relevant aspects of literacy and numeracy), but it refers only to data gathered in 2016 and 2017 and does not address the “critical element” of the appropriateness of cut scores.

As for the department’s claim that “extensive data” is available on their website, this is all the data provided on the Literacy component of LANTITE for eight years:

In comparison with the documentation provided for NAPLAN in just one year, the description of this LANTITE data as “extensive” is difficult to take seriously.

Protecting ACER’s competitive advantage

Some time back, the department explained to me one of the reasons they would not release the LANTITE technical documents:

“ACER is a private entity engaged by the department to administer the LANTITE and to collect and collate data for the purpose of internal analysis of LANTITE test participation and performance. … release of the data would reveal ACER’s methods of data analysis and collection. … disclosure of this material would have a detrimental effect on ACER’s commercial interests because the information would be advantageous to their competitors.”

I argued in October that ACER had already disclosed substantial information about its data collection and analysis methods in the NAPLAN 2023 Technical Report. In their most recent submission to the OAIC, ACER acknowledged that “some of the analytic methodologies and software used to conduct analyses are the same” for both LANTITE and NAPLAN. At the very least, then, material in the LANTITE technical reports related to these methods and software should be available for public scrutiny.

But ACER have tried to justify withholding all material related to LANTITE analytic methodologies on the basis that LANTITE and NAPLAN are different. They highlight real differences in:

- Demographic characteristics of the test-takers

- The frequency of test administrations

- Uses of test results.

They have not, however, explained why these differences are relevant to considerations about sharing information about their LANTITE methodology with the public.

ACER claim in their submission that “the LANTITE technical reports exist in a structure where such information cannot be reported without at the same time supplying the confidential information outlined” and that “publication of the methodologies necessarily reveals confidential information in cases like LANTITE.” They do not, however, provide any further explanation or justification of either claim, and do not explain why LANTITE technical reports could not be written in an alternative structure which would not risk supplying the confidential information.

ACER claim that “the privacy and ethical considerations differ considerably” between LANTITE and NAPLAN. This claim does not follow in any obvious way from their descriptions of the technical reporting practices of the two testing programs, and they do not provide the argumentation required to establish a link between them. In other words, the fact that the reporting practices differ does not mean that the privacy and ethical considerations also differ.

ACER state that “the reports for NAPLAN are intended to be public facing whereas for LANTITE the opposite is the case.” This is not in dispute. The issue is that the reports for LANTITE should be public facing, and the reason for the lack of transparency remains unclear, despite multiple submissions from ACER and the department. Similarly, the department states that the LANTITE technical reports “contain far more information regarding ACER’s methodologies and procedures than is contained in the NAPLAN documents.” This, again, merely describes a difference in practices rather than offering a credible justification for that difference.

In conclusion, the submissions from ACER and the department serve to highlight ways in which LANTITE technical reporting practices diverge from standard educational measurement practice, as illustrated by the case of NAPLAN, without actually providing credible justification for this divergence.

Protecting the privacy of individual test-takers

The department argued earlier on in the FOI process that the LANTITE Data Access Protocols preclude the publication of data that could identify individual test-takers or higher education providers (incidentally, these Protocols were only released publicly because of another FOI request that I made).

In their October 2024 submission to the OAIC, the department says that the Protocols “are related to the access and use of LANTITE data, not the technical or administration reports themselves.” This suggests that the Protocols relate only to the raw data and not to the analysis or methodology provided in the reports. If that is the case, then it would follow that the Protocols cannot be used to justify withholding the reports from the public. This would be a surprising position for the department to take.

The department has also indicated that they agree with my “proposition that it is possible to publish test data without identifying individual test candidates, but submits that the documents requested by the applicant were not prepared on this basis.” So why not “prepare documents on that basis”? The department still has not explained why they haven’t yet done this or indicated that they ever intend to do so.

Summary

The submissions from ACER and the department, as summarised by the OAIC, provide descriptive material which is of questionable relevance to my FOI request, and they do not provide any further justification for the decision to withhold the LANTITE technical and administration reports from the public in their entirety.